# The Seat Is Dead. The Meter Is Hungry.

OpenAI just killed fixed-tier pricing for Codex teams. Cursor switched to credit-based billing in June 2025. Augment Code charges $15 per 24,000-credit top-up, billed in arrears. Seven of nine text models released in March 2026 were open-weight, which means the model itself is no longer the moat. The pricing model is.

Here is the math that should scare every engineering leader. According to Cledara, monthly spending on AI coding tools grew from $217,000 in January 2025 to $670,000 by March 2026. That is an increase of roughly 209% in 14 months, a tripling, for a single budget category. Companies did not cut other tools to make room. They stacked these on top. One developer profiled in recent research allocates $1,100 per month across Cursor Ultra, Claude Max, and supporting tools. That is $13,200 a year. For one person. With no cap.

Seat-based SaaS gave you a number you could put in a spreadsheet. Pay-as-you-go gives you a number that changes every month based on how hard your team thinks. And most teams are budgeting for it the same way they budget for office snacks.

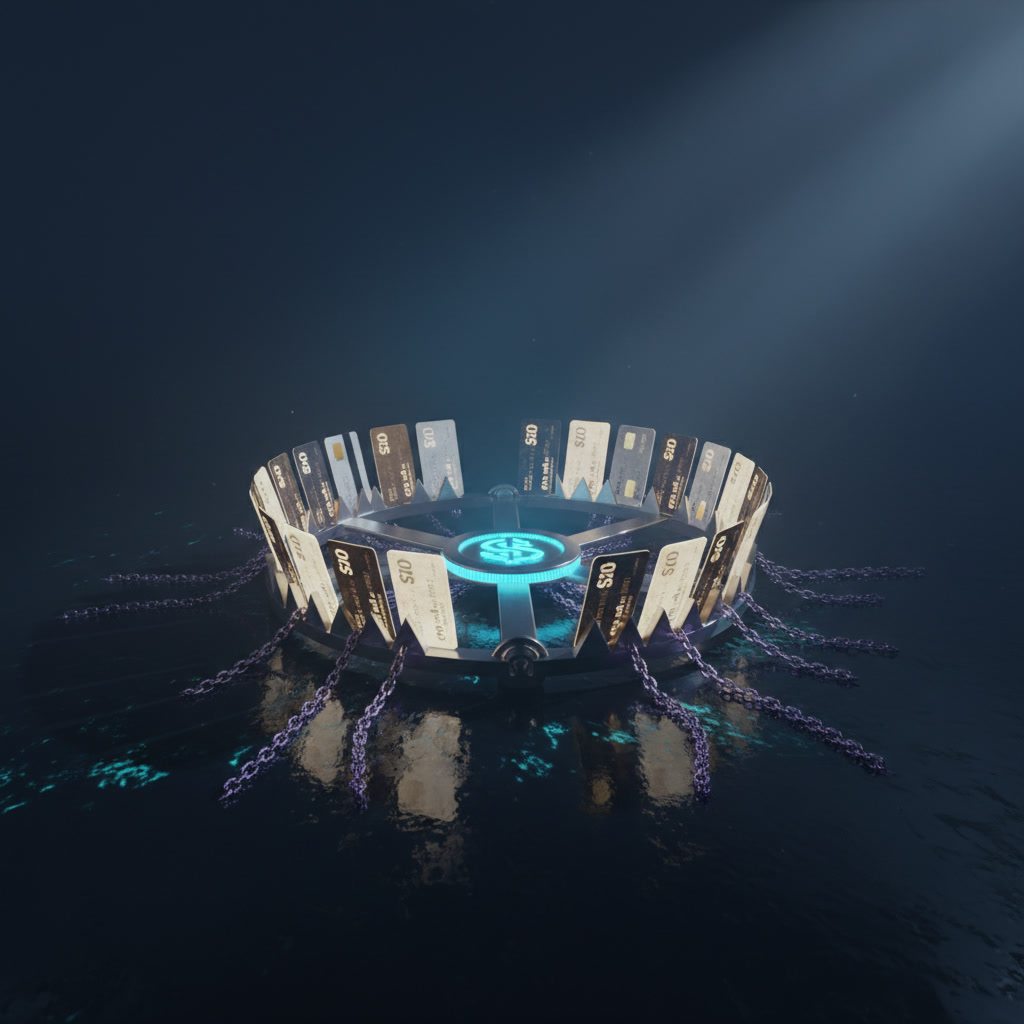

## The Meter Trap

Here is the framework. I call it The Meter Trap. It works like this.

Every pay-as-you-go tool has three layers of cost. Layer one is the sticker price. Cursor Teams at $40 per user per month. These look like seat prices. They are not. They are floor prices. Layer two is the overage. Cursor charges $0.25 per million tokens beyond your allocation. GitHub Copilot charges $0.04 per premium request past your monthly cap of 300 to 1,000. Layer three is the stack tax. You do not use one tool. You use three or four. CodeRabbit for review at $12 per user per month. An LLM API for agentic workflows. Maybe a vector database for RAG.

The Meter Trap is what happens when you budget for layer one and get invoiced for all three. A 50-developer team on Cursor Teams looks like $24,000 per year on paper. The real number, according to comparative pricing research, lands closer to $240,000 when you account for overages and daily agent usage patterns. That is a 10x gap between the number in the procurement deck and the number on the invoice.
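To make the three layers concrete, here is a minimal sketch of the arithmetic in Python. The function name and the $60 overage figure are hypothetical placeholders, not quoted prices. Only the $40 seat, the 50-developer team, and the $12 CodeRabbit seat come from the figures above.

```python
def annual_cost(devs, seat_price, overage_per_dev=0.0, stack_per_dev=0.0):
    """Yearly spend: seat floor + metered overage + stack tax.

    All three price inputs are per developer per month.
    """
    monthly_per_dev = seat_price + overage_per_dev + stack_per_dev
    return devs * monthly_per_dev * 12


# Layer one only: the number in the procurement deck (50 devs at $40).
sticker = annual_cost(devs=50, seat_price=40)

# All three layers. The $60 overage is an ASSUMED placeholder for illustration;
# the $12 is the CodeRabbit seat price mentioned in the text.
all_in = annual_cost(devs=50, seat_price=40, overage_per_dev=60, stack_per_dev=12)

print(f"sticker: ${sticker:,.0f}")
print(f"all-in:  ${all_in:,.0f}")
print(f"gap:     {all_in / sticker:.1f}x")
```

Swap in overage and stack numbers pulled from your own invoices. The point of the sketch is only that the sticker line is the first term of the sum, not the sum.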

The trap is not that usage-based pricing is bad. The trap is that it disguises variable cost as fixed cost until the bill arrives.

## The Golden Goose Has a Gas Meter

This is where the offer economics get interesting. And where most people get it wrong.

In any business, you have golden geese and golden eggs. The goose is the asset. The eggs are the output. Seat-based SaaS was a goose business. You bought the goose (the license), and it laid eggs (productivity) at a predictable rate. Your CFO could model it. Your procurement team could negotiate it. Barclays locked in Microsoft 365 Copilot at $30 per seat for 100,000 licenses. That is a goose deal.

Pay-as-you-go is an egg business. You pay per egg. The problem is that nobody knows how many eggs their developers will need next month. And the vendors like it that way.

Let me be blunt about what is happening. Vendors are shifting risk from their balance sheet to yours. When OpenAI replaces fixed tiers with usage-based pricing for Codex teams, they are not being generous by "lowering the entry barrier for smaller developer groups." They are making their revenue uncapped on the upside while making your costs unpredictable on the downside. That is not a partnership. That is an asymmetric bet, and you are on the wrong side of it.

The damaging admission here: this model is genuinely better for some teams. A three-person startup that codes 10 hours a week should not pay the same as a 200-person enterprise running agentic workflows 24/7. Usage-based pricing is fairer at the extremes. The problem lives in the middle, where 80% of teams sit.

Baseline annual cost: $114,000. Add premium request overages at moderate usage, and you are closer to $150,000. Stack on CodeRabbit at $12 per user per month ($7,200 per year) and Claude API costs for complex tasks ($5 to $25 per million tokens for Opus 4.6). A conservative all-in estimate lands between $180,000 and $250,000.

Cursor Teams: $40 per user per month. Baseline annual cost: $240,000. Daily agent users average $60 to $100 per month in effective cost. Heavy users blow past that. Add the stack tax and you are looking at $300,000 to $400,000.

Now compare that to what the same team spent 14 months ago. Probably $50,000 to $80,000 on JetBrains licenses and a handful of linters. The category has grown 3x to 5x, and the variance between light months and heavy months can swing 40% or more.

It is unclear whether most engineering organizations have updated their budgeting processes to handle this variance. The evidence from Cledara suggests they have not. They are adding AI coding tools on top of existing budgets, not restructuring how they forecast.

The lipstick-on-a-pig problem is real here too. Some vendors slap a "per seat" label on what is functionally a metered product. Run out of included credits, and the auto top-up kicks in, as with Augment Code's $15 per 24,000 credits. That is not a seat. That is a meter with a monthly minimum.

The honest framing: business is just hard, boring work. The exciting part is not picking the cheapest tool. The exciting part is building an internal system that tracks usage, forecasts spend, and renegotiates quarterly. Nobody wants to do that. That is exactly why you should.

Coder raising $90 million in Series C funding from KKR tells you where the smart money is going. Not into models. Into the platform layer that sits between developers and models, the layer that can flex with usage and give enterprises the control surface they need. The model is the commodity. The metering and management layer is the product.

## 2031

Pull back five years and the picture clarifies.

Seven of nine text models released in March 2026 were open-weight. That trend does not reverse. By 2031, the base capability of code generation will be effectively free. Not cheap. Free. The marginal cost of running an open-weight model on your own infrastructure will approach the cost of electricity and compute, which enterprises already budget for.

This means the pricing war happening right now is a transitional phenomenon. Proprietary vendors like OpenAI and Anthropic are racing to extract maximum revenue during the window where their models still hold a capability edge. Once open-weight models close that gap (and they are closing it faster than anyone expected), the entire value proposition shifts from "our model is smarter" to "our platform is stickier."

Salary buys furniture, equity buys your future. The same logic applies to tooling budgets. Teams that lock into proprietary, usage-based pricing today are buying furniture. Teams that invest in understanding their own usage patterns, building internal tooling around open-weight models, and negotiating enterprise agreements with pooled allocations are buying equity.

The asymmetric bet for engineering leaders is this: spend 20% more time on procurement and usage analytics now, and you save 40% on AI tooling costs over three years. The compounding effect of understanding your own consumption data is enormous. Most teams do not even know which developers are burning through credits and which ones barely touch the tool.

My read on this: the winners in 2031 will not be the teams with the best AI tools. They will be the teams with the best AI budgeting systems. The flywheel is measurement, then optimization, then negotiation, then reinvestment. The teams that start spinning that flywheel today have a five-year head start.

There is a beginner's mind problem here too. Most engineering leaders approach AI tooling the way they approached SaaS in 2015. Pick a vendor, roll it out, review annually. That cadence is too slow for a category where pricing models change quarterly and new competitors launch monthly. Impermanence is the defining feature of this market. Plan accordingly.

## What to Build This Weekend

You do not need a finance degree to get ahead of The Meter Trap. You need a spreadsheet and 90 minutes.

Step one: audit your current AI coding tool spend. Log into your billing dashboards for every tool your team uses. Cursor, Copilot, Claude API, CodeRabbit, whatever. Pull the last three months of invoices. Write down the actual number, not the per-seat number from the pricing page.

Step two: calculate your effective cost per developer per month. Take total spend across the three months, divide by number of developers, then divide by three. Compare that number to the sticker price. If the effective cost runs more than 30% above the sticker price, you have a Meter Trap problem.
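If you would rather script step two than build it in a spreadsheet, a rough Python sketch looks like this. The function names and the sample invoice total are made up for illustration. The 30% threshold and the $40 sticker price are the ones used in this post.

```python
def effective_cost_per_dev(total_spend, developers, months=3):
    """Average monthly spend per developer over the invoice window."""
    return total_spend / developers / months


def has_meter_trap(effective, sticker, threshold=0.30):
    """True when effective cost exceeds the sticker price by more than the threshold."""
    gap = (effective - sticker) / sticker
    return gap > threshold


# Hypothetical example: $9,000 invoiced over three months for 50 developers
# on a $40 per user per month plan.
effective = effective_cost_per_dev(total_spend=9_000, developers=50)
print(f"effective: ${effective:.2f} per dev per month")
print("Meter Trap problem:", has_meter_trap(effective, sticker=40))
```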

Step three: identify your top 10% and bottom 10% of users by consumption. Most tools have usage dashboards. If yours does not, that is a red flag. The top 10% are probably driving 50% or more of your overages. The bottom 10% might not be using the tool at all. Both groups need a conversation.
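Step three is a sort and two slices once you have a usage export. The sketch below assumes you can get a mapping of developer to monthly spend (or credits) out of your tool's dashboard. The names and numbers are invented for the example.

```python
def usage_deciles(usage_by_dev):
    """Return (top 10% of devs, bottom 10% of devs, top group's share of total usage)."""
    ranked = sorted(usage_by_dev.items(), key=lambda kv: kv[1], reverse=True)
    n = max(1, len(ranked) // 10)  # at least one person in each group
    top, bottom = ranked[:n], ranked[-n:]
    total = sum(usage_by_dev.values())
    top_share = sum(v for _, v in top) / total if total else 0.0
    return [dev for dev, _ in top], [dev for dev, _ in bottom], top_share


# Hypothetical monthly spend per developer, in dollars.
usage = {"a": 500, "b": 100, "c": 90, "d": 80, "e": 70,
         "f": 60, "g": 50, "h": 30, "i": 20, "j": 0}

top, bottom, share = usage_deciles(usage)
print("top 10%:", top)
print("bottom 10%:", bottom)
print(f"top group share of usage: {share:.0%}")
```

Ranking by spend rather than by request count is a design choice here. Use whichever unit your invoice is actually denominated in.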

Step four: set a monthly spend alert. Every billing dashboard has this. Set it at 120% of your average monthly spend. When it triggers, investigate before the invoice arrives.
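The 120% rule is simple enough to compute yourself before typing it into a dashboard. This is a sketch under the assumption that you have a list of recent monthly totals. The helper names and figures are hypothetical.

```python
from statistics import mean


def alert_threshold(monthly_spend, multiplier=1.2):
    """Alert level: 120% of average monthly spend by default."""
    return mean(monthly_spend) * multiplier


def should_alert(month_to_date, monthly_spend, multiplier=1.2):
    """True once this month's running total reaches the alert level."""
    return month_to_date >= alert_threshold(monthly_spend, multiplier)


# Hypothetical last three monthly invoices, in dollars.
history = [10_000, 12_000, 14_000]

print(f"alert at: ${alert_threshold(history):,.0f}")
print("investigate now:", should_alert(15_000, history))
```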

Step five: evaluate one open-weight alternative. Open-source tools like Aider and Cline charge no seat fee and run on pure API costs, so you can point them at an open-weight model. For your bottom 50% of users (the ones who use AI coding tools lightly), an open-weight option at $0.25 per million tokens might cost 80% less than a $40 per month seat they barely touch.
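Whether the metered option wins depends on volume, so it helps to find the break-even. The sketch below uses the $0.25 per million tokens and $40 seat figures from step five. It assumes token fees are the only cost and ignores any hosting or setup overhead. The function names are illustrative.

```python
def metered_monthly_cost(tokens_per_month, price_per_million=0.25):
    """Monthly cost of pure pay-per-token usage."""
    return tokens_per_month / 1_000_000 * price_per_million


def breakeven_tokens(seat_price=40.0, price_per_million=0.25):
    """Monthly token volume at which metered cost equals the seat price."""
    return seat_price / price_per_million * 1_000_000


def savings_vs_seat(tokens_per_month, seat_price=40.0, price_per_million=0.25):
    """Fraction saved by going metered (negative means the seat is cheaper)."""
    return 1 - metered_monthly_cost(tokens_per_month, price_per_million) / seat_price


print(f"break-even: {breakeven_tokens():,.0f} tokens per month")
print(f"light user at 32M tokens saves {savings_vs_seat(32_000_000):.0%}")
```

At these prices the seat only pays for itself past 160 million tokens a month. A light user at 32 million tokens would pay $8, which is where an 80% saving comes from.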

This is not about switching tools. This is about knowing your numbers. The teams that know their numbers negotiate better deals, catch runaway spend early, and make informed decisions about when to upgrade and when to downgrade.

You can do this without a CS degree. You can do this without a CFO. First the audit, then the alerts, then the optimization. Build one tiny thing at a time. Your future self, staring at a Q3 invoice that makes sense, will thank you.