The same week, Coder raised $90 million in Series C funding from KKR. And 95% of developers now report using AI tools weekly, with the average power user stacking 2.3 tools at once.

That is three data points from one week. They tell the same story. The way developer teams pay for AI is changing structurally, and most teams are not ready for what that means for their budgets or their freedom to switch tools later.

Here is why, and what to do about it.

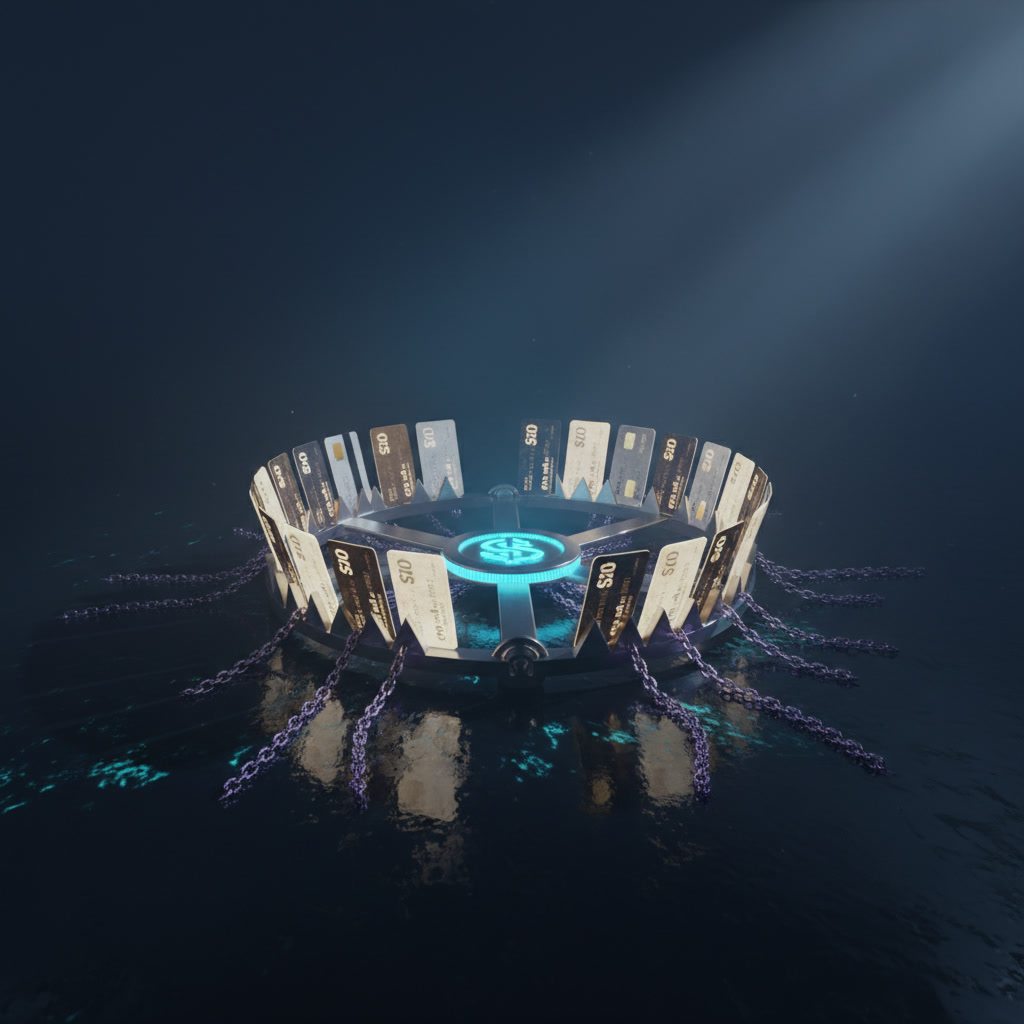

The $20 Trap

Here is the framework. I call it The $20 Trap.

Every major AI coding tool has converged on the same price: $20 per month. Cursor Pro, Windsurf Pro, Claude Code Pro, v0 Premium. All twenty bucks. GitHub Copilot undercuts them at $10 per month with 300 premium requests. The entry point looks cheap. That is the trap.

The $20 Trap works in three stages:

Stage 1: The Welcome Mat. Low monthly cost removes all friction. A solo developer or a two-person team can say yes without a procurement meeting. No CFO approval needed. Just a credit card.

Stage 2: The Credit Drain. You start using the tool for real work. Your credits deplete. Overages kick in at standard API rates. Cursor charges pay-as-you-go once your monthly credit pool runs dry. Windsurf moved from credits to daily and weekly quotas on March 19, 2026, forcing heavy users into a $200 per month Max tier.

Stage 3: The Workflow Cement. Your team builds shared rules, custom commands, and chat prompts inside the tool. Your CI/CD pipeline integrates with it. Switching now means rearchitecting deployment workflows, retraining your team, and losing months of accumulated context.

The $20 Trap is not about the $20. It is about the $200 per month you end up paying after your workflows are cemented in place. Simple scales, complex fails. And the complexity here is invisible until you try to leave.

The Lazy Way to Spend $2,847 a Month on AI Coding Tools

Let me show you exactly how this plays out in real dollars, because the numbers are bigger than most teams expect.

A team of 10 developers using a layered AI coding approach spends approximately $1,160 per month. That is the moderate scenario. Scale to 50 people and you are looking at $8,000 to $13,500 per month depending on whether you go Microsoft-first or best-of-breed. One documented case hit $2,847 per month from a single user running unchecked agent sessions. That is not a team. That is one person.
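To put those figures on a per-seat basis, here is a quick back-of-the-envelope sketch in Python. The dollar amounts come straight from the paragraph above; the helper name `per_seat` is just an illustrative label, and the comparison against a $20 plan assumes the single heavy user started on a standard $20 tier.

```python
def per_seat(monthly_total: float, headcount: int) -> float:
    """Average monthly cost per developer."""
    return monthly_total / headcount

# Figures quoted in the text (USD per month).
ten_person_team = per_seat(1_160, 10)   # moderate 10-developer scenario
fifty_low = per_seat(8_000, 50)         # 50 people, low end
fifty_high = per_seat(13_500, 50)       # 50 people, high end

# Assumption: the runaway single user started on a $20 plan.
runaway_multiple = 2_847 / 20

print(f"10-person team: ${ten_person_team:.0f} per developer")
print(f"50-person team: ${fifty_low:.0f} to ${fifty_high:.0f} per developer")
print(f"Runaway user: {runaway_multiple:.0f}x the $20 sticker price")
```

Per seat, the cost goes up with team size rather than down, and the single runaway user costs more per month than the entire 10-person team.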

Here is the part most people miss. The average user actively uses only 42% of their paid AI subscriptions. Let that sink in. Teams are paying for tools they barely touch while simultaneously blowing past limits on the one tool they actually use.

The old way was painful. You paid a flat enterprise license, maybe $39 per user per month for GitHub Copilot Enterprise with 1,000 requests per user. Expensive upfront. But predictable. Your finance team could budget it in Q1 and forget about it.

The new way looks cheaper on paper. Pay-as-you-go. Credits. Tokens. Premium requests. Claude Sonnet 4.6 costs $3 per million input tokens and $15 per million output tokens. Opus 4.6 runs $5 and $25 respectively. Sounds granular and fair. But granular and fair also means unpredictable and hard to forecast.
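Here is a rough sketch of why per-token pricing is hard to forecast. The rates are the ones quoted above. The token volumes are made-up assumptions for illustration, and `monthly_token_cost` and the model keys are hypothetical names, not any vendor's API.

```python
# Per-million-token rates quoted in the text (USD).
RATES = {
    "sonnet-4.6": {"input": 3.00, "output": 15.00},
    "opus-4.6": {"input": 5.00, "output": 25.00},
}

def monthly_token_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate a month's bill from token counts at the quoted rates."""
    rate = RATES[model]
    return (
        (input_tokens / 1_000_000) * rate["input"]
        + (output_tokens / 1_000_000) * rate["output"]
    )

# Hypothetical monthly volume for one heavy agent user:
# 40M input tokens and 8M output tokens.
sonnet_bill = monthly_token_cost("sonnet-4.6", 40_000_000, 8_000_000)
opus_bill = monthly_token_cost("opus-4.6", 40_000_000, 8_000_000)

print(f"Sonnet 4.6: ${sonnet_bill:.2f}")
print(f"Opus 4.6:   ${opus_bill:.2f}")
```

Under these assumed volumes the same workload costs $240 on one model and $400 on the other. Nobody on the team sees the token count while they work, which is why the bill is hard to predict.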

This is the hard way versus the easy way, except the "easy way" comes with a hidden bill at the end of the month.

My read on this: OpenAI replacing fixed-tier plans with usage-based pricing for Codex teams is not about generosity. It is about removing the initial "no" from smaller teams who would never approve a flat enterprise license. Get them in the door at $20. Let the usage compound. The meter runs whether you are watching it or not.

And here is what nobody is talking about. Vendor lock-in is not happening through pricing anymore. It is happening through workflow integration. Once your deployment pipeline runs through their tool, switching is not canceling a subscription. Switching is rearchitecting how your team ships code.

The "1+2 model" tells the story. Analysis shows that one primary platform plus one or two specialized tools outperforms broader portfolios. Teams naturally consolidate. And consolidation is just a polite word for dependency.

It is unclear whether the current $20 price floor is sustainable for vendors. If these companies raise prices after teams are locked in, the $20 Trap snaps shut completely.

There is also a quality problem hiding inside the volume. According to Veracode, 45% of AI-generated code contains security flaws. Failure rates hit 88% for specific vulnerability categories like cross-site scripting. More usage at lower prices means more flawed code, faster. The productivity gain is real, but so is the technical debt. Teams that skip code review because the AI "handled it" are building on sand.

2031

Pull back to the five-year view. Where does this pricing shift land by 2031?

Gartner predicts a 20% to 50% increase in developer output by 2027 from AI tooling alone. If that holds, the developer who matters in 2031 is not the one who writes the most code. It is the one who reviews, architects, and directs AI agents most effectively. Salary buys furniture, equity buys your future. And the equity play here is learning to manage AI-generated output, not just consume it.

The asymmetric risk is real. Software licensing gets cheaper. The silicon underneath it gets more expensive. That tension resolves in one of two ways: either vendors absorb the cost and consolidate (fewer players, deeper lock-in), or they pass it through and consumption-based pricing gets genuinely expensive.

Coder raising $90 million from KKR in the same week as OpenAI's pricing shift is not a coincidence. It is a signal. KKR is not a venture fund chasing hype. It is a private equity firm underwriting infrastructure. Enterprise developer tooling is becoming a high-conviction category because the compounding effect is obvious: every developer hour saved compounds across every sprint, every quarter, every year.

The flywheel favors incumbents. GitHub Copilot's integration with Actions creates switching costs that compound with team size. Cursor's shared team configurations create coordination costs that grow with headcount. The longer you stay, the harder it is to leave. Impermanence is a useful concept here. Every tool you adopt today will either evolve into something indispensable or die. The question is whether you can afford the transition when it happens.

I think the winning strategy for 2031 is deliberate portability. Teams that treat AI coding tools as interchangeable layers rather than foundational infrastructure will have asymmetric optionality. The ones who wire their entire deployment pipeline through a single vendor's agentic features will move fast now and pay later.

What to Build This Weekend

You do not need to solve vendor lock-in this weekend. You need to understand your actual exposure. Here is a three-step exercise anyone can do.

Step 1: Audit your AI tool spend. Open your credit card statement. List every AI coding tool subscription. Write down the monthly cost and your estimated usage percentage. Remember, 42% average utilization means you are probably paying for tools you forgot you signed up for. Cancel what you do not use. This takes 20 minutes.

Step 2: Test a portable workflow. Pick one task you currently do inside your primary AI coding tool. Try doing it with a different tool. Solid, which launched this week, generates production-grade full-stack apps using Node.js instead of prototype-only outputs. Intent by Augment Code handles the full git workflow from spec to merged PR. Try one of these for a single task. See how painful the switch feels. That pain level is your lock-in score.

Step 3: Set a usage alert. If your tool supports it, set a spending cap or notification at 150% of your base subscription cost. Cursor's credit system and Copilot's premium request limits both have overage mechanics. Know your threshold before you hit it. If your tool does not support alerts, track it manually in a spreadsheet for one month.
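Steps 1 and 3 are simple enough to script. Here is a minimal sketch in Python, not a finished tool. The prices are the ones quoted earlier in this piece. The usage percentages, the 20% cancel cutoff, the sample spend figures, and every function name are my own placeholders, so swap in your real numbers.

```python
from dataclasses import dataclass

@dataclass
class Subscription:
    name: str
    monthly_cost: float   # USD per month
    usage_pct: float      # your own estimate, 0 to 100

def audit(subs, cancel_below=20.0):
    """Step 1: total spend, spend on unused capacity, and cancel candidates."""
    total = sum(s.monthly_cost for s in subs)
    used = sum(s.monthly_cost * s.usage_pct / 100 for s in subs)
    cancel = [s.name for s in subs if s.usage_pct < cancel_below]
    return {"total": total, "unused_spend": total - used, "cancel": cancel}

def alert_threshold(base_cost, multiplier=1.5):
    """Step 3: spending level that should trigger a notification."""
    return base_cost * multiplier

def should_alert(base_cost, spend_to_date, multiplier=1.5):
    """True once month-to-date spend reaches the threshold."""
    return spend_to_date >= alert_threshold(base_cost, multiplier)

# Prices from the text; usage percentages are hypothetical.
subs = [
    Subscription("Cursor Pro", 20, 90),
    Subscription("GitHub Copilot", 10, 40),
    Subscription("Windsurf Pro", 20, 10),
    Subscription("v0 Premium", 20, 5),
]

report = audit(subs)
print(report)

# A $20 plan with the 150% rule alerts at $30 of month-to-date spend.
print(alert_threshold(20))        # 30.0
print(should_alert(20, 24.50))    # False
print(should_alert(20, 31.00))    # True
```

With these sample numbers, $45 of a $70 monthly bill goes to capacity nobody uses, and two subscriptions fall under the cancel cutoff. If your tool has no built-in alert, running `should_alert` against a weekly spend figure from your billing page does the same job as the spreadsheet.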

The goal is not to avoid AI coding tools. The productivity gains are real. The goal is to adopt them with your eyes open. Get your reps in with multiple tools so you understand the switching cost before it matters. Learn in public. Share what you find with your team.

The $20 Trap only works if you do not see it. Now you see it.